ZomboDB sits near the top of the list of things that bite production PostgreSQL teams, and yet most teams handle it the same wrong way. This post walks through what we actually do on production PostgreSQL deployments when ZomboDB comes up, with the SQL we run, the configuration we change, and the guardrails we leave behind.

Quick answer

To handle ZomboDB in PostgreSQL: baseline current behavior with pg_stat_statements and pg_stat_activity, then narrow the symptom to the right layer (query plan, configuration, autovacuum, replication, or storage). Apply the smallest reversible change, validate against your baseline, and end with a Datadog or Prometheus alert plus a runbook entry. The full SQL and worked examples are below.

What is ZomboDB?

ZomboDB is a PostgreSQL extension that exposes Elasticsearch as an index type, so text search runs through ordinary SQL. In this post, "ZomboDB" also covers the operational discipline around running it. It lives in the same extension ecosystem as pgvector and TimescaleDB, which is why teams that adopt one usually end up wrestling with the others. Get it wrong and you get user-visible latency, throughput collapse, or operational risk you didn't budget for.
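For orientation, here is a minimal sketch of what using ZomboDB looks like in SQL. The products table, its columns, the index name, and the Elasticsearch URL are all hypothetical. The sketch assumes the zombodb extension is already installed on the server and that an Elasticsearch cluster is reachable from it.

```sql
-- Assumes the zombodb extension binaries are installed on the server
-- and Elasticsearch is reachable at the (hypothetical) URL used below.
CREATE EXTENSION IF NOT EXISTS zombodb;

-- Hypothetical table, for illustration only.
-- zdb.fulltext is a ZomboDB-provided domain over text that is analyzed for full-text search.
CREATE TABLE products (
    id bigserial PRIMARY KEY,
    name text NOT NULL,
    long_description zdb.fulltext
);

-- A ZomboDB index covers the whole row and keeps a copy of it in Elasticsearch.
CREATE INDEX idx_products_zdb
    ON products
    USING zombodb ((products.*))
    WITH (url = 'http://localhost:9200/');

-- The ==> operator sends a text query through the ZomboDB index.
SELECT id, name
FROM products
WHERE products ==> 'long_description:wooden';
```

Because the index lives partly outside PostgreSQL, index builds and writes also depend on Elasticsearch health, which is one reason to baseline before tuning anything.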

Under the hood, running ZomboDB touches a small set of PostgreSQL subsystems: the buffer manager, the write-ahead log, the planner and statistics collector, autovacuum, and the replication and HA layers. This guide walks through each in the order you should investigate them when a real production problem hits, with the SQL we actually run during engagements.

Why ZomboDB matters in production

Most PostgreSQL incidents that escalate in production trace back to ZomboDB in one of three flavors: a sudden p99 latency cliff, a slow buildup that fires an alert weeks too late, or a regression that lands the moment an apparently unrelated deployment goes out. Each has a different root cause and a different fix.

What makes ZomboDB tricky is that the symptom rarely points cleanly at the root cause. A latency spike might come from the index itself, or it might be a noisy neighbor at the storage layer, or it might be an unrelated checkpoint cycle saturating disk I/O. That's why measurement comes before tuning, every single time.

A useful mental model: every PostgreSQL change has a cost, a blast radius, and a reversibility. The cheapest, smallest, most reversible change that actually moves your metric is almost always the right first step. It may not be the change you eventually want in steady state, but it buys you the time and confidence to make the bigger one safely.

How ZomboDB works in PostgreSQL

PostgreSQL behavior around ZomboDB is governed by five subsystems. Each can quietly affect throughput in ways that aren't visible from query logs alone.

- Buffer manager. The shared_buffers pool decides what stays hot in PostgreSQL memory versus the OS page cache.

- Write-ahead log. Every change is written to WAL before it touches the heap. Replication, PITR, and crash recovery all depend on it.

- Planner and statistics. The cost-based optimizer interacts with statistics gathered by ANALYZE to choose query plans.

- Autovacuum. Background workers reclaim dead tuples produced by MVCC. Mistuned autovacuum is the single most common cause of slow-building performance regressions.

- Process model. PostgreSQL forks a backend per connection. work_mem is a limit per sort or hash operation within each backend, so one complex query can use several multiples of it, which is exactly the surprise that takes down clusters during connection storms.

Knowing which layer your symptom belongs to determines the fix. A p99 spike caused by checkpoint I/O is configuration. A regression caused by stale planner statistics is operational. A correlation between table growth and write latency is almost always autovacuum starvation. The diagnostic queries below help you place the symptom on this map before you change anything.
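As a rough sketch of how to place a symptom on this map, the queries below read the cumulative statistics views for three of the layers. They are illustrative starting points, not a complete diagnostic, and the LIMIT values are arbitrary.

```sql
-- Autovacuum layer: tables carrying the most dead tuples, and when they were last vacuumed.
SELECT relname,
       n_live_tup,
       n_dead_tup,
       last_autovacuum,
       last_autoanalyze
FROM pg_stat_user_tables
ORDER BY n_dead_tup DESC
LIMIT 10;

-- Planner layer: tables that have never been analyzed, manually or by autovacuum.
SELECT relname
FROM pg_stat_user_tables
WHERE last_analyze IS NULL
  AND last_autoanalyze IS NULL;

-- Buffer manager layer: shared_buffers hit ratio for the current database.
SELECT datname,
       blks_hit,
       blks_read,
       round(blks_hit * 100.0 / nullif(blks_hit + blks_read, 0), 2) AS hit_pct
FROM pg_stat_database
WHERE datname = current_database();
```

A high dead-tuple count with an old last_autovacuum points at the autovacuum layer; a low hit ratio on a working set that should fit in memory points at the buffer manager.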

How to diagnose ZomboDB issues

Before changing anything, capture a baseline. PostgreSQL has world-class observability built into the engine itself through the pg_stat_* views, so use it. Read-only queries against those views take no locks heavier than ACCESS SHARE, but some scan large system catalogs and should run on a read replica or during a low-traffic window on very large clusters. The DDL in the steps that follow is different: CREATE INDEX without CONCURRENTLY blocks writes to the table while the index builds.
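A minimal baseline sketch using the two views named in the quick answer. It assumes pg_stat_statements is already installed and listed in shared_preload_libraries; the column names are those of PostgreSQL 13 and later (older versions use total_time and mean_time).

```sql
-- Top statements by cumulative execution time.
-- Assumes pg_stat_statements is installed; columns are for PostgreSQL 13+.
SELECT queryid,
       calls,
       round(total_exec_time::numeric, 1) AS total_ms,
       round(mean_exec_time::numeric, 2) AS mean_ms,
       rows,
       left(query, 80) AS query_text
FROM pg_stat_statements
ORDER BY total_exec_time DESC
LIMIT 20;

-- What is running right now, longest-running first.
SELECT pid,
       state,
       wait_event_type,
       wait_event,
       now() - query_start AS running_for,
       left(query, 80) AS query_text
FROM pg_stat_activity
WHERE state <> 'idle'
  AND pid <> pg_backend_pid()
ORDER BY running_for DESC NULLS LAST;
```

Save the output somewhere durable. The numbers only become useful once you have a second capture to compare against.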

Step 1. Install pgvector and create an HNSW index for cosine similarity.

CREATE EXTENSION IF NOT EXISTS vector;

CREATE TABLE documents (
    id bigserial PRIMARY KEY,
    body text,
    embedding vector(1536)
);

CREATE INDEX ON documents USING hnsw (embedding vector_cosine_ops) WITH (m = 16, ef_construction = 64);

Read the output with two questions in mind. Does the shape match what you expected? And what's the worst-case row? The shape tells you whether your mental model of the cluster matches reality. The worst-case row tells you where the next surprise will come from.

How to fix ZomboDB step by step

The fix has three parts: the schema or configuration change itself, the rollout plan, and the validation. Skip any one of them and the problem returns within weeks.

On managed PostgreSQL services like AWS RDS, Aurora, Cloud SQL, and Azure Flexible Server, schema changes still happen via plain SQL. Configuration changes go through the provider's own mechanism: parameter groups on RDS and Aurora, database flags on Cloud SQL, server parameters on Azure. Some parameters take effect immediately, others require a reboot. Verify with SELECT name, context FROM pg_settings WHERE name = '<param>'; before scheduling the change window. A context of postmaster means the change needs a restart.
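As a sketch of that check, the query below lists the context for a handful of commonly tuned parameters. The parameter list is only an example; substitute the ones you plan to change.

```sql
-- context = 'postmaster' needs a restart; 'sighup' needs only a reload;
-- 'user' and 'superuser' can be changed per session.
SELECT name, setting, unit, context, pending_restart
FROM pg_settings
WHERE name IN ('shared_buffers', 'work_mem', 'max_wal_size', 'autovacuum_vacuum_scale_factor')
ORDER BY context, name;

-- Parameters that have been changed but are still waiting for a restart.
SELECT name, setting
FROM pg_settings
WHERE pending_restart;
```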

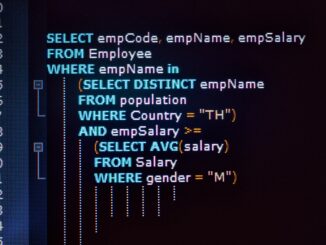

Step 2. Top-k similarity search using the HNSW index.

SET hnsw.ef_search = 100;

SELECT id, body, embedding <=> $1 AS distance
FROM documents
ORDER BY embedding <=> $1
LIMIT 10;
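To confirm the search actually uses the HNSW index, one option is to run it under EXPLAIN. This sketch assumes a row with id = 1 exists and uses its embedding as the query vector in place of the $1 parameter.

```sql
BEGIN;

-- SET LOCAL limits the setting to this transaction.
SET LOCAL hnsw.ef_search = 100;

-- Look for an Index Scan on the hnsw index rather than a Seq Scan followed by a Sort.
EXPLAIN (ANALYZE, BUFFERS)
SELECT id, body
FROM documents
ORDER BY embedding <=> (SELECT embedding FROM documents WHERE id = 1)
LIMIT 10;

ROLLBACK;
```

On a very small table the planner may still choose a sequential scan because it is cheaper, so test against a realistic row count.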

Step 3. Convert a regular table into a TimescaleDB hypertable.

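CREATE EXTENSION IF NOT EXISTS timescaledb;

CREATE TABLE metrics (
    time timestamptz NOT NULL,
    device text NOT NULL,
    value double precision NOT NULL
);

SELECT create_hypertable('metrics', 'time', chunk_time_interval => interval '1 day');

SELECT add_retention_policy('metrics', interval '90 days');

-- Compression must be enabled on the hypertable before a compression policy can be added.
ALTER TABLE metrics SET (timescaledb.compress, timescaledb.compress_segmentby = 'device');

SELECT add_compression_policy('metrics', interval '7 days');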
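A quick way to check that the hypertable and its policies registered is to read the informational views. This sketch assumes TimescaleDB 2.x, where these views and columns exist under the timescaledb_information schema.

```sql
-- Is metrics a hypertable, and how many chunks does it have?
SELECT hypertable_name, num_chunks, compression_enabled
FROM timescaledb_information.hypertables
WHERE hypertable_name = 'metrics';

-- Background jobs (retention, compression) attached to the hypertable.
SELECT job_id, proc_name, schedule_interval, next_start
FROM timescaledb_information.jobs
WHERE hypertable_name = 'metrics';

-- Most recent chunks and whether each one has been compressed yet.
SELECT chunk_name, range_start, range_end, is_compressed
FROM timescaledb_information.chunks
WHERE hypertable_name = 'metrics'
ORDER BY range_start DESC
LIMIT 10;
```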

Step 4. Validation. Re-run your baseline query and compare the results. If the change didn't move the metric you set out to improve, revert before chasing a second hypothesis. Tuning one PostgreSQL parameter at a time is the only way to keep your sanity, and your audit trail, intact.
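One way to make the before-and-after comparison concrete is to snapshot pg_stat_statements and later compute per-query means over only the calls made since the snapshot. The baseline_statements table name is hypothetical, and the sketch assumes PostgreSQL 13+ column names.

```sql
-- Before the change: snapshot the counters into a (hypothetical) table.
CREATE TABLE baseline_statements AS
SELECT userid, dbid, queryid, calls, total_exec_time, now() AS captured_at
FROM pg_stat_statements;

-- After the change has run under comparable traffic:
-- mean latency before the snapshot versus mean latency since the snapshot.
SELECT s.queryid,
       round((b.total_exec_time / nullif(b.calls, 0))::numeric, 2) AS before_ms,
       round(((s.total_exec_time - b.total_exec_time)
              / nullif(s.calls - b.calls, 0))::numeric, 2) AS after_ms,
       s.calls - b.calls AS calls_since
FROM baseline_statements AS b
JOIN pg_stat_statements AS s USING (userid, dbid, queryid)
WHERE s.calls > b.calls
ORDER BY after_ms DESC NULLS LAST
LIMIT 20;
```

Subtracting the snapshot matters because pg_stat_statements counters are cumulative; comparing raw means would blend the old and new behavior. The comparison is invalid if the statistics were reset between the two captures.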

Production guardrails and monitoring

A change isn't done when it ships. It's done when you have the alert, the runbook entry, and the rollback plan. The difference between a healthy PostgreSQL platform and a chronically firefighting one is whether teams complete this last 20 percent of the work.

- Add a Datadog or Prometheus alert on the metric you just improved at a threshold 20 percent above your new baseline.

- Capture an EXPLAIN (ANALYZE, BUFFERS) for any regressed query into your runbook so the on-call engineer has the next-step diagnostic ready.

- Document the rollback path: the exact SQL or ALTER SYSTEM sequence to restore the prior state if the change misbehaves.

- Set a calendar reminder to re-validate after the next major PostgreSQL version upgrade. Planner behaviors and default GUC values do change.

- Record the pg_stat_statements query ID and a representative plan in your team wiki so you can compare against future regressions.

- Schedule a follow-up review 30 days after the change to confirm the improvement persisted under realistic production traffic.
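As an illustration of a documented rollback path, here is a sketch for a single parameter on self-managed PostgreSQL. The parameter (work_mem) and the value are examples only. Managed services generally do not permit ALTER SYSTEM, so the equivalent there is reverting the provider-side setting.

```sql
-- Record the current value before changing anything.
SHOW work_mem;

-- The change (example value only).
ALTER SYSTEM SET work_mem = '64MB';
SELECT pg_reload_conf();

-- Rollback: drop the override from postgresql.auto.conf and reload.
ALTER SYSTEM RESET work_mem;
SELECT pg_reload_conf();

-- Confirm which value is active and where it comes from.
SELECT name, setting, unit, source
FROM pg_settings
WHERE name = 'work_mem';
```

ALTER SYSTEM cannot run inside a transaction block, so execute these statements one at a time rather than wrapping them in BEGIN and COMMIT.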

Going deeper with cross-checks

Once the basic fix is in place, the next layer of validation cross-checks against complementary signals. The query below is the one we run on production PostgreSQL deployments to confirm the change has propagated everywhere it should.

Distribute a table across a Citus cluster by tenant_id.

CREATE EXTENSION IF NOT EXISTS citus;

SELECT * FROM citus_add_node('worker1.db', 5432);

SELECT * FROM citus_add_node('worker2.db', 5432);

CREATE TABLE events (
    id bigserial,
    tenant_id uuid NOT NULL,
    occurred_at timestamptz NOT NULL,
    payload jsonb
);

SELECT create_distributed_table('events', 'tenant_id');
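To check that the distribution took effect on every worker, a sketch along these lines can be run on the coordinator. It assumes Citus 10 or later, where the citus_tables and citus_shards views are available.

```sql
-- Workers the coordinator currently considers active.
SELECT * FROM citus_get_active_worker_nodes();

-- How the events table is distributed.
SELECT table_name, citus_table_type, distribution_column, shard_count
FROM citus_tables
WHERE table_name = 'events'::regclass;

-- Shard placement per worker; a heavily lopsided count suggests a rebalance is needed.
SELECT nodename, count(*) AS shards
FROM citus_shards
WHERE table_name = 'events'::regclass
GROUP BY nodename
ORDER BY nodename;
```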

Common mistakes and anti-patterns

After hundreds of production PostgreSQL deployments we see the same anti-patterns around this topic again and again, across companies, industries, and continents. None are obvious in the moment. Each looked like the right call when someone made it.

- Tuning ZomboDB by copy-pasting from a 2014 blog post without re-validating against PostgreSQL 14, 15, 16, or 17 behavior.

- Changing more than one PostgreSQL parameter at a time without measurement.

- Forgetting to ANALYZE after a large data load, then wondering why the planner picked a sequential scan over your shiny new index.

- Trusting an unverified backup or an untested failover.

- Treating autovacuum as something to disable rather than something to tune.

- Allowing developers to write production queries with no EXPLAIN review.
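Two of these mistakes have cheap SQL-level countermeasures. The sketch below uses the documents table from the earlier steps as a stand-in; the autovacuum thresholds are illustrative values, not recommendations.

```sql
-- After a bulk load, refresh planner statistics explicitly.
ANALYZE documents;

-- Check when statistics were last refreshed and how many rows have changed since.
SELECT relname, last_analyze, last_autoanalyze, n_mod_since_analyze
FROM pg_stat_user_tables
WHERE relname = 'documents';

-- Tune autovacuum per table instead of disabling it (example thresholds only).
ALTER TABLE documents SET (
    autovacuum_vacuum_scale_factor = 0.02,
    autovacuum_analyze_scale_factor = 0.01
);
```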

PostgreSQL on AWS, Aurora, GCP, Azure

If you're running on AWS RDS, Aurora, Cloud SQL, AlloyDB, or Azure Flexible Server, here's what changes. Schema work is identical to self-managed PostgreSQL. Configuration goes through parameter groups. Some OS-level levers are gone. And Aurora plays by slightly different rules because of its decoupled storage architecture.

Specifics worth memorizing. AWS RDS PostgreSQL on gp3 storage gives you provisioned IOPS, but the maximum is per-volume, not per-instance. That fact surprises customers scaling vCPU and expecting linear I/O. Google AlloyDB's columnar engine is opt-in per table; turning it on is a one-line SQL call, but the analytical workload eligibility rules aren't always obvious until you read the EXPLAIN plan. Azure Database for PostgreSQL Flexible Server exposes a broader set of extensions than RDS or Aurora, including pg_partman, pgvector, TimescaleDB, and Citus on the Citus-flavored variant.

When this approach is the wrong starting point

This technique assumes a roughly normal OLTP PostgreSQL workload with healthy autovacuum. It's the wrong starting point if your workload is dominated by long analytical queries against Citus distributed tables or TimescaleDB hypertables, if you run on Aurora's storage-decoupled architecture (where buffer-pool semantics differ), or if the symptom is actually a network or kernel-level issue masquerading as a PostgreSQL problem.

Another pattern we see often. An AI startup's RAG pipeline ran on a separate Pinecone cluster bleeding 7,000 dollars a month. We migrated their embeddings into pgvector with HNSW, kept the same recall, and they retired the entire vector database tier.

Frequently asked questions

Are PostgreSQL extensions safe to run in production?

Trusted extensions from the PostgreSQL community ecosystem are production-grade. Validate maintainer track record, security history, and your managed cloud's compatibility list before standardizing on any extension.

Can production teams use Citus on AWS RDS PostgreSQL?

No. Citus runs on Microsoft's managed Citus offering (Azure Cosmos DB for PostgreSQL) or on self-managed PostgreSQL. AWS RDS and Aurora do not allow installing the Citus extension.

Should production teams use pgvector or a dedicated vector database?

For most teams, pgvector eliminates a moving part and keeps retrieval and metadata in one place. A dedicated vector database is worth the operational cost only at billions of vectors with sub-50ms p99 latency requirements.

Does TimescaleDB work alongside Citus?

Not natively in the same database. They are alternative scaling strategies for PostgreSQL: TimescaleDB scales time-series writes vertically with hypertables, while Citus scales horizontally across worker nodes.

How do I write my own PostgreSQL extension?

Start from the C-language extension scaffolding in the PostgreSQL source tree. For pure-SQL extensions, use the CREATE EXTENSION packaging with a control file and SQL script. The pgvector source code is an excellent reference model.

Where should I start if I’m new to ZomboDB for Elasticsearch integration with PostgreSQL?

Read this guide end to end, then run the diagnostic SQL queries against a non-production PostgreSQL database to build intuition. Most engineers we coach are productive within a day. Bookmark this page, then move on to the related posts linked below for deeper dives.

Further Reading

PostgreSQL ALTER TABLE ADD COLUMN: Hidden Dangers and Production Pitfalls