Mastering Cassandra Consistency Levels in Distributed Systems

Introduction

Apache Cassandra’s consistency levels are fundamental to understanding how this distributed NoSQL database manages data across multiple nodes. As organizations scale their applications globally, choosing the right consistency level becomes crucial for balancing performance, availability, and data accuracy. This comprehensive guide explores Cassandra’s consistency model and provides practical insights for optimizing your database operations.

Understanding Cassandra’s Consistency Model

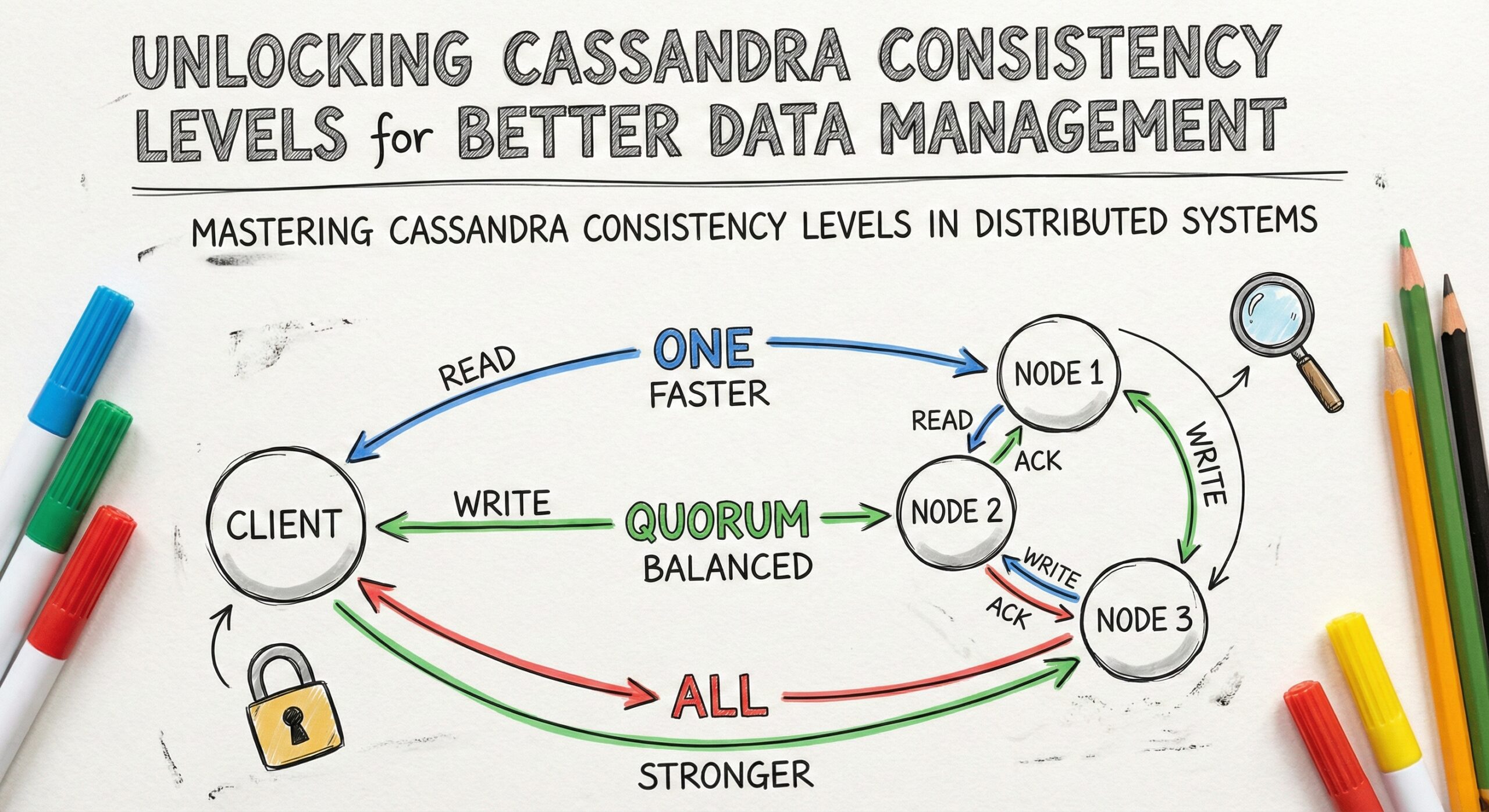

Cassandra operates on an eventually consistent model, allowing you to fine-tune the trade-off between consistency and performance through configurable consistency levels. Unlike traditional ACID databases, Cassandra provides tunable consistency, meaning you can specify different consistency requirements for read and write operations based on your application’s needs.

The consistency level determines how many replica nodes must respond to a read or write before the coordinator node reports success to the client. For writes, the coordinator still sends the mutation to every replica; the consistency level only controls how many acknowledgments it waits for. This flexibility allows developers to optimize for different scenarios, from high-performance applications requiring minimal latency to critical systems demanding strong consistency guarantees.
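
To make the coordinator’s role concrete, here is a toy Python model of a single write: the coordinator forwards it to every live replica and answers the client once the required number of acknowledgments is reached. It assumes a simplified world where each replica is either up or down (no slow replicas, no timeouts), and `coordinate_write` and `UnavailableError` are hypothetical names, not part of any Cassandra driver.

```python
class UnavailableError(Exception):
    """Hypothetical stand-in for Cassandra's Unavailable error (not a driver class)."""


def coordinate_write(replica_alive: list[bool], required: int) -> int:
    """Toy coordinator for one write at a consistency level needing `required` acks.

    The write is sent to every live replica; the client is answered once
    `required` of them have acknowledged. Returns how many replicas end up
    holding the new value.
    """
    if required > len(replica_alive):
        raise ValueError("consistency level needs more replicas than the replication factor")
    alive = sum(replica_alive)
    if alive < required:
        # Fail fast: the coordinator already knows too few replicas are up.
        raise UnavailableError(f"need {required} replicas, only {alive} alive")
    # Live replicas beyond `required` still apply the write; the client just does not wait for them.
    return alive


# Replication factor 3 with one replica down.
print(coordinate_write([True, True, False], required=2))  # 2
try:
    coordinate_write([True, False, False], required=2)
except UnavailableError as err:
    print("write failed:", err)  # write failed: need 2 replicas, only 1 alive
```

Returning the number of live replicas, rather than just `required`, reflects that extra replicas still receive the write even though the client does not wait for them.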

Write Consistency Levels

ANY

The ANY consistency level, which applies to writes only, provides the lowest latency but offers minimal durability guarantees. A write at ANY succeeds as soon as any node accepts it: if every replica for the partition is down, the coordinator stores a hint (hinted handoff) and still reports success. Such a write cannot be read until a replica comes back and receives the hint. This level is rarely recommended for production environments due to its weak durability characteristics.
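
The difference between ANY and ONE when every replica is down can be sketched as follows. This is a toy model that assumes the coordinator itself is healthy; `write_at_any`, `write_at_one`, and `UnavailableError` are illustrative names only.

```python
class UnavailableError(Exception):
    """Hypothetical stand-in for Cassandra's Unavailable error."""


def write_at_any(replica_alive: list[bool]) -> bool:
    """Toy ANY write: always acknowledged while the coordinator is up.

    Returns True if a real replica stored the value, False if only a
    coordinator hint exists (the data is not readable until the hint is replayed).
    """
    return any(replica_alive)


def write_at_one(replica_alive: list[bool]) -> bool:
    """Toy ONE write: at least one real replica must acknowledge."""
    if not any(replica_alive):
        raise UnavailableError("no replica for this partition is alive")
    return True


all_down = [False, False, False]
print(write_at_any(all_down))  # False: acknowledged, but held only as a hint
try:
    write_at_one(all_down)
except UnavailableError as err:
    print("ONE failed:", err)
```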

ONE

ONE consistency level requires acknowledgment from only one replica node. This setting provides fast write performance while maintaining basic durability. It’s suitable for applications where write speed is prioritized over immediate consistency across all replicas.

TWO and THREE

These levels require acknowledgment from two or three replica nodes respectively. They offer increased durability compared to ONE while maintaining reasonable performance. These levels are often used in scenarios where you need better consistency guarantees than ONE but don’t require the overhead of stronger consistency levels.

QUORUM

QUORUM is one of the most commonly used consistency levels in production environments. It requires acknowledgment from a majority of all replicas, counted across every data center: floor(sum of replication factors / 2) + 1. For a replication factor of 3, QUORUM requires 2 nodes to acknowledge the write. On its own, a QUORUM write guarantees the data is on a majority of replicas; paired with QUORUM reads, it yields strong consistency while still tolerating the loss of a minority of replicas.
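
The quorum size is simple integer arithmetic. The sketch below assumes replication factors are given per data center (the names `dc1` and `dc2` are hypothetical) and shows that QUORUM sums replicas across all of them.

```python
def quorum(rf_by_dc: dict[str, int]) -> int:
    """QUORUM counts replicas across all data centers:
    floor(sum of replication factors / 2) + 1."""
    total_rf = sum(rf_by_dc.values())
    return total_rf // 2 + 1


print(quorum({"dc1": 3}))             # 2
print(quorum({"dc1": 5}))             # 3
print(quorum({"dc1": 3, "dc2": 3}))   # 4
```

With two data centers at RF 3 each, QUORUM needs 4 acknowledgments, so it can never be satisfied by one data center alone; that cross-data center dependency is what LOCAL_QUORUM avoids.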

LOCAL_QUORUM

LOCAL_QUORUM requires a quorum of replica nodes within the local data center to acknowledge the write. This level is particularly valuable in multi-data center deployments where you want strong consistency within a data center while avoiding cross-data center latency for write operations.

EACH_QUORUM

EACH_QUORUM demands a quorum of replica nodes in each data center to acknowledge the write. Short of ALL, this level provides the strongest consistency guarantees in multi-data center environments, but it comes with higher latency due to cross-data center coordination and fails if any single data center cannot reach its quorum.
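
The per-data-center rules for LOCAL_QUORUM and EACH_QUORUM can be expressed the same way. This is a sketch that assumes you already know how many replicas in each data center acknowledged; the function names and the data center names `us_east` and `eu_west` are hypothetical.

```python
def local_quorum(rf_by_dc: dict[str, int], local_dc: str) -> int:
    """Acks needed from the coordinator's own data center for LOCAL_QUORUM."""
    return rf_by_dc[local_dc] // 2 + 1


def each_quorum_met(rf_by_dc: dict[str, int], acks_by_dc: dict[str, int]) -> bool:
    """EACH_QUORUM succeeds only if every data center reaches its own quorum."""
    return all(acks_by_dc.get(dc, 0) >= rf // 2 + 1 for dc, rf in rf_by_dc.items())


topology = {"us_east": 3, "eu_west": 3}  # hypothetical data center names
print(local_quorum(topology, "us_east"))                        # 2
print(each_quorum_met(topology, {"us_east": 3, "eu_west": 1}))  # False: eu_west has no quorum
print(each_quorum_met(topology, {"us_east": 2, "eu_west": 2}))  # True
```

The first acknowledgment pattern would still satisfy LOCAL_QUORUM for a client in `us_east`, since three local replicas answered; only EACH_QUORUM is blocked by the lagging remote data center.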

ALL

ALL consistency level requires acknowledgment from all replica nodes. While this provides the strongest consistency and durability guarantees, it significantly impacts availability. If any replica node is unavailable, write operations will fail, making this level unsuitable for high-availability requirements.

Read Consistency Levels

ONE

Reading with ONE consistency level returns data from a single replica, normally the closest one as determined by the snitch. This provides the fastest read performance but may return stale data if that replica hasn’t received recent updates.

TWO and THREE

These levels query two or three replica nodes respectively and return the most recent data based on timestamps. They offer better consistency than ONE while maintaining reasonable performance characteristics.
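
The timestamp-based reconciliation used when several replicas answer can be sketched as last-write-wins on a single cell. This is a simplification: it ignores deletions (tombstones) and Cassandra’s own tie-breaking rule for equal timestamps, and the `Cell` type and `reconcile` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cell:
    value: str
    write_time: int  # write timestamp in microseconds since the epoch


def reconcile(responses: list[Cell]) -> Cell:
    """Last write wins: keep the response with the highest write timestamp."""
    if not responses:
        raise ValueError("no replica responses")
    return max(responses, key=lambda cell: cell.write_time)


# A read at TWO: one replica missed the latest update.
stale = Cell("alice@old.example", write_time=1_700_000_000_000_000)
fresh = Cell("alice@new.example", write_time=1_700_000_500_000_000)
print(reconcile([stale, fresh]).value)  # alice@new.example
```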

QUORUM

QUORUM for reads queries a majority of replica nodes and returns the most recent data among their responses. Paired with QUORUM writes, every read quorum overlaps every write quorum in at least one replica (R + W > RF), so the read is guaranteed to see the latest acknowledged write. With weaker writes such as ONE, a QUORUM read can still return stale data.
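
The overlap argument behind pairing QUORUM reads with QUORUM writes can be checked directly. The sketch below compares the arithmetic rule R + W > RF against a brute-force check over every possible pair of read and write replica sets; it models a single data center, and the function names are illustrative.

```python
from itertools import combinations


def overlap_by_rule(r: int, w: int, rf: int) -> bool:
    """Arithmetic rule: read and write sets must share a replica when R + W > RF."""
    return r + w > rf


def overlap_by_enumeration(r: int, w: int, rf: int) -> bool:
    """Brute force: does every possible read set intersect every possible write set?"""
    replicas = range(rf)
    return all(
        set(read_set) & set(write_set)
        for read_set in combinations(replicas, r)
        for write_set in combinations(replicas, w)
    )


rf = 3
quorum = rf // 2 + 1  # 2
print(overlap_by_rule(quorum, quorum, rf))  # True  (QUORUM + QUORUM: 2 + 2 > 3)
print(overlap_by_rule(1, 1, rf))            # False (ONE + ONE: 1 + 1 <= 3)
print(overlap_by_rule(1, rf, rf))           # True  (ONE read, ALL write)

# The rule and the brute-force check agree for every R and W with this RF.
assert all(
    overlap_by_rule(r, w, rf) == overlap_by_enumeration(r, w, rf)
    for r in range(1, rf + 1)
    for w in range(1, rf + 1)
)
```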

LOCAL_QUORUM

LOCAL_QUORUM reads from a quorum of replica nodes within the local data center. This level provides strong consistency within the data center while avoiding cross-data center latency that could impact read performance.

EACH_QUORUM

EACH_QUORUM reads from a quorum of replica nodes in each data center. This level is rarely used for reads as it introduces significant latency without substantial consistency benefits over LOCAL_QUORUM in most scenarios.

ALL

ALL consistency level for reads queries all replica nodes and returns the most recent data. While this provides the strongest consistency guarantees, it severely impacts availability and performance, as any unavailable replica node will cause the read to fail.

SERIAL and LOCAL_SERIAL

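These specialized consistency levels are used with lightweight transactions (LWT) to provide linearizable consistency. For a conditional write, they govern the Paxos consensus phase and are specified separately, as the serial consistency level, while the regular consistency level still applies to committing the result. SERIAL ensures linearizability across all data centers, while LOCAL_SERIAL provides linearizability within the local data center.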

Choosing the Right Consistency Level

Performance Considerations

Lower consistency levels like ONE and TWO generally provide lower latency because the coordinator waits for fewer replicas, so a single slow replica is less likely to delay the response. Higher consistency levels like QUORUM and ALL must wait for more replicas to answer, so their latency tracks the slower replicas in the set; with ALL, the slowest replica sets the pace.
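
A rough model of this effect: if the coordinator replies as soon as the required number of replicas have answered, the client sees the latency of the k-th fastest replica. The per-replica latencies below are made-up numbers, and the model ignores coordinator overhead and speculative retries.

```python
def response_latency_ms(replica_latencies_ms: list[float], required: int) -> float:
    """The coordinator answers once `required` replicas have replied, so the
    client sees the latency of the required-th fastest replica."""
    if not 1 <= required <= len(replica_latencies_ms):
        raise ValueError("required must be between 1 and the number of replicas")
    return sorted(replica_latencies_ms)[required - 1]


latencies = [5.0, 2.0, 40.0]  # made-up numbers: one replica is slow
print(response_latency_ms(latencies, 1))  # 2.0  (ONE)
print(response_latency_ms(latencies, 2))  # 5.0  (QUORUM with RF 3)
print(response_latency_ms(latencies, 3))  # 40.0 (ALL)
```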

Availability Trade-offs

Consistency levels directly impact system availability. An operation succeeds only while at least as many replicas as the level requires are reachable, so with a replication factor of 3, ONE tolerates two unavailable replicas, QUORUM tolerates one, and ALL tolerates none. Consider your application’s availability requirements when selecting consistency levels.
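
These failure-tolerance figures follow from subtracting the required replica count from the replication factor. A minimal sketch for a single data center, with `tolerated_failures` as an illustrative name:

```python
def tolerated_failures(rf: int, required: int) -> int:
    """How many replicas can be down while the operation still succeeds."""
    if not 1 <= required <= rf:
        raise ValueError("required must be between 1 and the replication factor")
    return rf - required


rf = 3
for name, required in [("ONE", 1), ("QUORUM", rf // 2 + 1), ("ALL", rf)]:
    print(name, tolerated_failures(rf, required))
# ONE 2
# QUORUM 1
# ALL 0
```

The same arithmetic shows why an even replication factor buys little for QUORUM: with RF 4, QUORUM needs 3 replicas and still tolerates only one failure.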

Data Accuracy Requirements

Applications that must always read the most recent acknowledged write should choose read and write levels whose replica counts sum to more than the replication factor, for example QUORUM for both, or ONE for reads with ALL for writes. Systems that can tolerate eventual consistency can benefit from the performance advantages of lower consistency levels.

Best Practices for Production Environments

Standard Recommendations

For most single-data center production applications, using QUORUM for both reads and writes is a sound default balance of consistency, performance, and availability. With a replication factor of 3, this combination ensures strong consistency (2 + 2 > 3) while tolerating the loss of one replica.

Multi-Data Center Deployments

In multi-data center environments, LOCAL_QUORUM is often preferred to avoid cross-data center latency while maintaining strong consistency within each data center. Consider using EACH_QUORUM only when global strong consistency is absolutely required.

Application-Specific Tuning

Different parts of your application may require different consistency levels. Critical data like user authentication might use QUORUM, while less critical data like analytics could use ONE for better performance.

Monitoring and Alerting

Implement comprehensive monitoring to track read and write latencies and the rates of timeout and unavailable errors at each consistency level. Set up alerts for scenarios where higher consistency levels might be causing availability issues.

Advanced Consistency Scenarios

Lightweight Transactions

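When using Cassandra’s lightweight transactions for conditional updates (for example, INSERT ... IF NOT EXISTS), SERIAL or LOCAL_SERIAL consistency levels ensure linearizable consistency. These operations run a Paxos round with several extra network round trips, so they are considerably slower than ordinary writes, and their guarantee is a linearizable compare-and-set within a single partition rather than full multi-partition ACID transactions.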

Batch Operations

Batch operations can use different consistency levels, but be aware that higher consistency levels for large batches can significantly impact performance. Consider breaking large batches into smaller operations with appropriate consistency levels.
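
One way to break up a large batch, assuming each row carries its partition key, is to group rows by partition and then cap each group’s size. Single-partition batches are generally the inexpensive case in Cassandra, while batches spanning many partitions fan out through the coordinator. The `Row` shape and the `split_batches` helper are hypothetical.

```python
from collections import defaultdict
from typing import Iterable, Iterator

Row = tuple[str, dict]  # (partition_key, column values): a hypothetical row shape


def split_batches(rows: Iterable[Row], max_size: int) -> Iterator[list[Row]]:
    """Group rows by partition key, then cut each group into batches of at most max_size rows."""
    if max_size < 1:
        raise ValueError("max_size must be at least 1")
    by_partition: dict[str, list[Row]] = defaultdict(list)
    for row in rows:
        by_partition[row[0]].append(row)
    for group in by_partition.values():  # insertion order: first-seen partition first
        for start in range(0, len(group), max_size):
            yield group[start:start + max_size]


rows = [("sensor-1", {"t": i}) for i in range(5)] + [("sensor-2", {"t": 0})]
for batch in split_batches(rows, max_size=2):
    print(batch[0][0], len(batch))
# sensor-1 2
# sensor-1 2
# sensor-1 1
# sensor-2 1
```

Each resulting batch can then be sent with whatever consistency level the data requires, keeping any single request small.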

Time-Sensitive Data

For time-sensitive applications, consider using lower consistency levels with appropriate application-level conflict resolution mechanisms rather than relying solely on database-level consistency.

Troubleshooting Consistency Issues

Common Problems

Consistency-related issues often manifest as stale reads, write timeouts, or unavailable exceptions. Understanding the relationship between your consistency levels, replication factor, and cluster health is crucial for diagnosing these problems.

Diagnostic Techniques

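Use Cassandra’s built-in tools like nodetool to monitor cluster health and repair operations: nodetool status shows which nodes are up or down, and nodetool repair synchronizes data between replicas. Regular repairs help ensure data consistency across replicas, especially when using lower consistency levels.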

Recovery Strategies

When consistency issues arise, consider temporarily adjusting consistency levels, running repair operations, or implementing application-level consistency checks to maintain data integrity.

Performance Optimization

Consistency Level Impact on Throughput

Lower consistency levels generally provide higher throughput due to reduced coordination overhead. Profile your application with different consistency levels to find the optimal balance for your specific use case.

Network Topology Considerations

Your network topology significantly impacts consistency level performance. Ensure adequate network bandwidth and low latency between replica nodes, especially when using higher consistency levels.

Client-Side Optimization

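Implement proper retry logic and connection pooling in your client applications to handle timeouts and unavailable errors gracefully. Retry writes only when they are idempotent, since a write that timed out may still have been applied on some replicas.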
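
A minimal retry helper with exponential backoff and jitter might look like this. `TransientError` is a hypothetical placeholder for whatever timeout or unavailable exceptions your driver raises, `flaky_read` simulates a query, and the `sleep` parameter exists only so the example can run without waiting.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical placeholder for a driver's timeout or unavailable exceptions."""


def with_retries(
    op: Callable[[], T],
    attempts: int = 3,
    base_delay_s: float = 0.05,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `op`, retrying TransientError with exponential backoff plus jitter.

    Only wrap idempotent operations: a write that timed out may already be applied.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return op()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            delay = base_delay_s * (2 ** attempt)  # 0.05, 0.1, 0.2, ...
            sleep(delay + random.uniform(0, delay))  # jitter spreads out retries from many clients
    raise AssertionError("unreachable")


calls = {"n": 0}


def flaky_read() -> str:
    """Simulated query that times out twice before succeeding."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("read timed out")
    return "row"


print(with_retries(flaky_read, sleep=lambda _: None))  # row
print(calls["n"])  # 3
```

Injecting `sleep` keeps the helper testable; in production you would leave the default and tune `attempts` and `base_delay_s` to your latency budget.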

Conclusion

Mastering Cassandra’s consistency levels is essential for building robust, scalable distributed applications. The key is understanding your application’s specific requirements for consistency, performance, and availability, then selecting appropriate consistency levels that align with these needs.

Start with QUORUM for both reads and writes in production environments, then adjust based on your specific performance and consistency requirements. Regular monitoring and testing will help you optimize these settings as your application evolves and scales.

Remember that consistency levels are just one part of Cassandra’s broader distributed architecture. Combine proper consistency level selection with appropriate data modeling, cluster sizing, and operational practices to achieve optimal results in your distributed database environment.
